Imagine scrolling through social media or playing an online game, only to be interrupted by insulting and harassing comments. What if an artificial intelligence (AI) tool stepped in to remove the abuse before you even saw it?

This isn’t science fiction. Commercial AI tools like ToxMod and Bodyguard.ai are already used to monitor interactions in real time across social media and gaming platforms. They can detect and respond to toxic behaviour.

The idea of an all-seeing AI monitoring our every move might sound Orwellian, but these tools could be key to making the internet a safer place.

However, for AI moderation to succeed, it needs to prioritise values like privacy, transparency, explainability and fairness. So can we ensure AI can be trusted to make our online spaces better? Our two recent research projects into AI-driven moderation show this can be done – with more work ahead of us.

Negativity thrives online

Online toxicity is a growing problem. Nearly half of young Australians have experienced some form of negative online interaction, with almost one in five experiencing cyberbullying.

Whether it’s a single offensive comment or a sustained slew of harassment, such harmful interactions are part of daily life for many internet users.

The severity of online toxicity is one reason the Australian government has proposed banning social media for children under 14.

But this approach fails to fully address a core underlying problem: the design of online platforms and moderation tools. We need to rethink how online platforms are designed to minimise harmful interactions for all users, not just children.

Unfortunately, many tech giants with power over our online activities have been slow to take on more responsibility, leaving significant gaps in moderation and safety measures.

This is where proactive AI moderation offers the chance to create safer, more respectful online spaces. But can AI truly deliver on this promise? Here’s what we found.

‘Havoc’ in online multiplayer games

In our Games and Artificial Intelligence Moderation (GAIM) Project, we set out to understand the ethical opportunities and pitfalls of AI-driven moderation in online multiplayer games. We conducted 26 in-depth interviews with players and industry professionals to find out how they use and think about AI in these spaces.

Interviewees saw AI as a necessary tool to make games safer and combat the “havoc” caused by toxicity. With millions of players, human moderators can’t catch everything. But an untiring and proactive AI can pick up what humans miss, helping reduce the stress and burnout associated with moderating toxic messages.

But many players also expressed confusion about the use of AI moderation. They didn’t understand why they received account suspensions, bans and other punishments, and were often left frustrated that their own reports of toxic behaviour seemed to be lost to the void, unanswered.

Participants were especially worried about privacy in situations where AI is used to moderate voice chat in games. One player exclaimed: “my god, is that even legal?” It is – and it’s already happening in popular online games such as Call of Duty.

Our study revealed there’s tremendous positive potential for AI moderation. However, games and social media companies will need to do a lot more work to make these systems transparent, empowering and trustworthy.

Right now, AI moderation is seen to operate much like a police officer in an opaque justice system. What if AI instead took the form of a teacher, guardian, or upstander – educating, empowering or supporting users?

Enter AI Ally

This is where our second project, AI Ally, comes in. Funded by the eSafety Commissioner, the initiative responds to high rates of tech-based gendered violence in Australia: we are co-designing an AI tool to support girls, women and gender-diverse individuals in navigating safer online spaces.

We surveyed 230 people from these groups, and found that 44% of our respondents “often” or “always” experienced gendered harassment on at least one social media platform. It happened most frequently in response to everyday online activities like posting photos of themselves, particularly in the form of sexist comments.

Interestingly, our respondents reported that documenting instances of online abuse was especially useful when they wanted to support other targets of harassment, such as by gathering screenshots of abusive comments. But only a few of those surveyed did this in practice. Understandably, many also feared for their own safety should they intervene by defending someone or even speaking up in a public comment thread.

These are worrying findings. In response, we are designing our AI tool as an optional dashboard that detects and documents toxic comments. To help guide us in the design process, we have created a set of “personas” that capture some of our target users, inspired by our survey respondents.

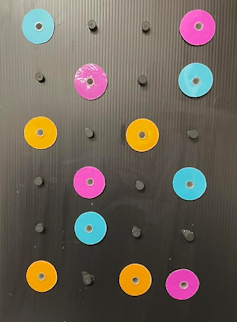

Some of the user ‘personas’ guiding the development of the AI Ally tool. Ren Galwey/Research Rendered

We allow users to make their own decisions about whether to filter, flag, block or report harassment in efficient ways that align with their own preferences and personal safety.

In this way, we hope to use AI to offer young people easy-to-access support in managing online safety while offering autonomy and a sense of empowerment.

We can all play a role

AI Ally shows we can use AI to help make online spaces safer without having to sacrifice values like transparency and user control. But there is much more to be done.

Other, similar initiatives include Harassment Manager, which was designed to identify and document abuse on Twitter (now X), and HeartMob, a community where targets of online harassment can seek support.

Until ethical AI practices are more widely adopted, users must stay informed. Before joining a platform, check whether it is transparent about its policies and offers users control over moderation settings.

The internet connects us to resources, work, play and community. Everyone has the right to access these benefits without harassment and abuse. It’s up to all of us to be proactive and advocate for smarter, more ethical technology that protects our values and our digital spaces.
