Four hundred and thirty-two days prior to the election and 158 days before the Iowa caucus, millions of Americans will tune in for the second round of Democratic debates.

If this seems like a long time to contemplate the candidates, it is.

By comparison, Canadian election campaigns average just 50 days. In France, candidates have just two weeks to campaign, while Japanese law restricts campaigns to a meager 12 days.

Those countries all give more power than the U.S. does to the legislative branch, which might explain the limited attention to the selection of the chief executive.

But Mexico – which, like the U.S., has a presidential system – only allows 90 days for its presidential campaigns, with a 60-day “pre-season,” the equivalent of our nomination campaign.

So by all accounts, the U.S. has exceptionally long election campaigns – and they just keep getting longer. As a political scientist living in Iowa, I’m acutely aware of how long the modern American presidential campaign has become.

It wasn’t always this way.

The seemingly interminable presidential campaign is a modern phenomenon. It originated out of widespread frustration with the control that national parties used to wield over the selection of candidates. But changes to election procedures, along with media coverage that started to depict the election as a horse race, have also contributed to the trend.

Wresting power from party elites

For most of American history, party elites determined who would be best suited to compete in the general election. It was a process that took little time and required virtually no public campaigning by candidates.

But beginning in the early 20th century, populists and progressives fought for greater public control over the selection of their party’s candidates. They introduced the modern presidential primary and advocated for a more inclusive process for selecting convention delegates. As candidates sought support from a wider range of voters, they began to employ modern campaign tactics, like advertising.

Nonetheless, becoming the nominee didn’t require a protracted campaign.

Consider 1952, when Dwight Eisenhower publicly announced that he was a Republican just 10 months before the general election and indicated that he was willing to run for president. Even then, he remained overseas as NATO commander until June, when he resigned to campaign full time.

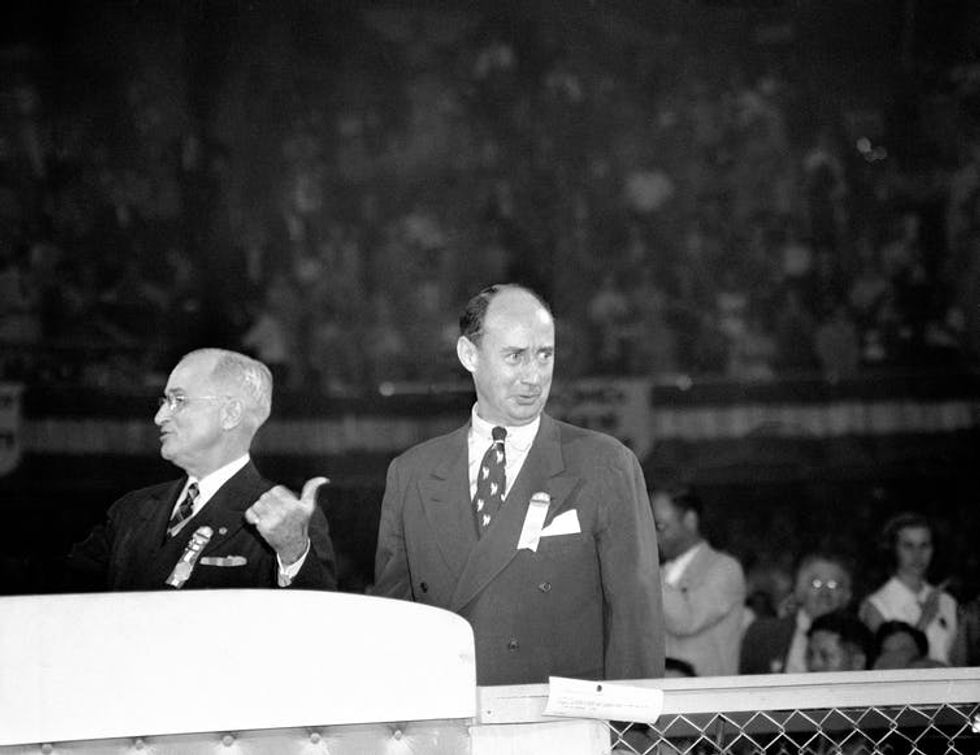

President Harry S. Truman points to Adlai E. Stevenson as he introduces him at the 1952 Democratic convention in Chicago.

On the Democratic side, Adlai Stevenson repeatedly rejected efforts to draft him for the nomination, despite encouragement from President Truman. That changed with his welcoming address at the national convention in July 1952 – just a few months before the general election. His speech so excited the delegates that they put his name forward, and he became the nominee.

And in 1960, even though John F. Kennedy appeared on the ballot in only 10 of the party’s 16 state primaries, he was still able to use his win in heavily Protestant West Virginia to convince party leaders that he could win over Protestant voters despite his Catholicism.

A shift to primaries

The contentious 1968 Democratic convention in Chicago, however, led to a series of reforms.

That convention had pitted young anti-war activists supporting Eugene McCarthy against older establishment supporters of Vice President Hubert Humphrey. Thousands of protesters rioted in the streets as Humphrey was nominated. The convention revealed deep divisions within the party, with many members convinced that party elites had acted against their wishes.

The resulting changes to the nomination process – dubbed the McGovern-Fraser reforms – were explicitly designed to allow rank-and-file party voters to participate in the nomination of a presidential candidate.

States increasingly shifted to public primaries rather than party caucuses. In a party caucus system – like that used in Iowa – voters meet at a designated time and place to discuss candidates and issues in person. By design, a caucus tends to attract activists deeply engaged in party politics.

Primaries, on the other hand, are conducted by the state government and require only that a voter show up for a few moments to cast their ballot.

As political scientist Elaine Kamarck has noted, only 15 states held primaries in 1968; by 1980, 37 did. For the 2020 election, only Iowa and Nevada have confirmed that they’ll hold caucuses.

The growing number of primaries encouraged candidates to use any tool at their disposal to reach as many voters as possible. Candidates became more entrepreneurial, name recognition and media attention grew more important, and campaigns became more media savvy – and more expensive.

It marked the beginning of what political scientists call the “candidate-centered campaign.”

The early bird gets the worm

In 1974, as his term as governor of Georgia was ending, just 2% of voters recognized the name of Democrat Jimmy Carter, and he had virtually no money.

But Carter theorized that he could build momentum by proving himself in states that held early primaries and caucuses. So on Dec. 12, 1974 – 691 days before the general election – Carter announced his presidential campaign. Over the course of 1975, he spent much of his time in Iowa, talking to voters and building a campaign operation in the state.

Jimmy Carter speaks to a crowd of supporters at a farm in Des Moines, Iowa.

By October 1975, The New York Times was heralding his popularity in Iowa, pointing to his folksy style, agricultural roots and political prowess. In the 1976 Iowa caucus, Carter came in second – “uncommitted” won – but he received more votes than any other named candidate. He was widely seen as the runaway victor, which boosted his prominence, name recognition and fundraising.

Carter would go on to win the nomination and the election.

His successful campaign became the stuff of political legend. Generations of political candidates and organizers have since adopted the early start, hoping that a better-than-expected showing in Iowa or New Hampshire will similarly propel them to the White House.

Other states crave the spotlight

As candidates tried to repeat Carter’s success, other states tried to steal some of Iowa’s political prominence by pushing their contests earlier and earlier in the nomination process, a trend called “frontloading.”

In 1976, when Jimmy Carter ran, just 10% of national convention delegates had been selected by March 2. By 2008, that share had risen to 70%.

When state primaries and caucuses were spread out in the calendar, candidates could compete in one state, then move their campaign operation to the next state, raise some money and spend time getting to know the activists, issues and voters before the next primary or caucus. A frontloaded system, in contrast, requires candidates to run a campaign in dozens of states at the same time.

To be competitive in so many states at once, campaigns rely on extensive paid and earned media exposure and a robust campaign staff, all of which require substantial name recognition and campaign cash before the Iowa caucus and New Hampshire primary.

Ironically, the parties have exacerbated these trends during the 2016 and 2020 cycles by using the number of donors and public polls to determine who is eligible for early debates. For example, to earn a place on the stage of the first Democratic debate in June, candidates had to accumulate at least 65,000 donors or 1% support in national polls.

So that’s how we got to where we are today.

A century ago, Warren Harding announced his successful candidacy 321 days before the 1920 election.

This cycle, Maryland Congressman John Delaney announced his White House bid a record 1,194 days before the election.

Rachel Caufield, Associate Professor of Political Science, Drake University

This article is republished from The Conversation under a Creative Commons license. Read the original article.