Warning: This article contains discussion of suicide, accounts of self-harm and eating disorders and may be triggering for some readers. Have tips or internal documents about tech companies? Email techtips@rawstory.com.

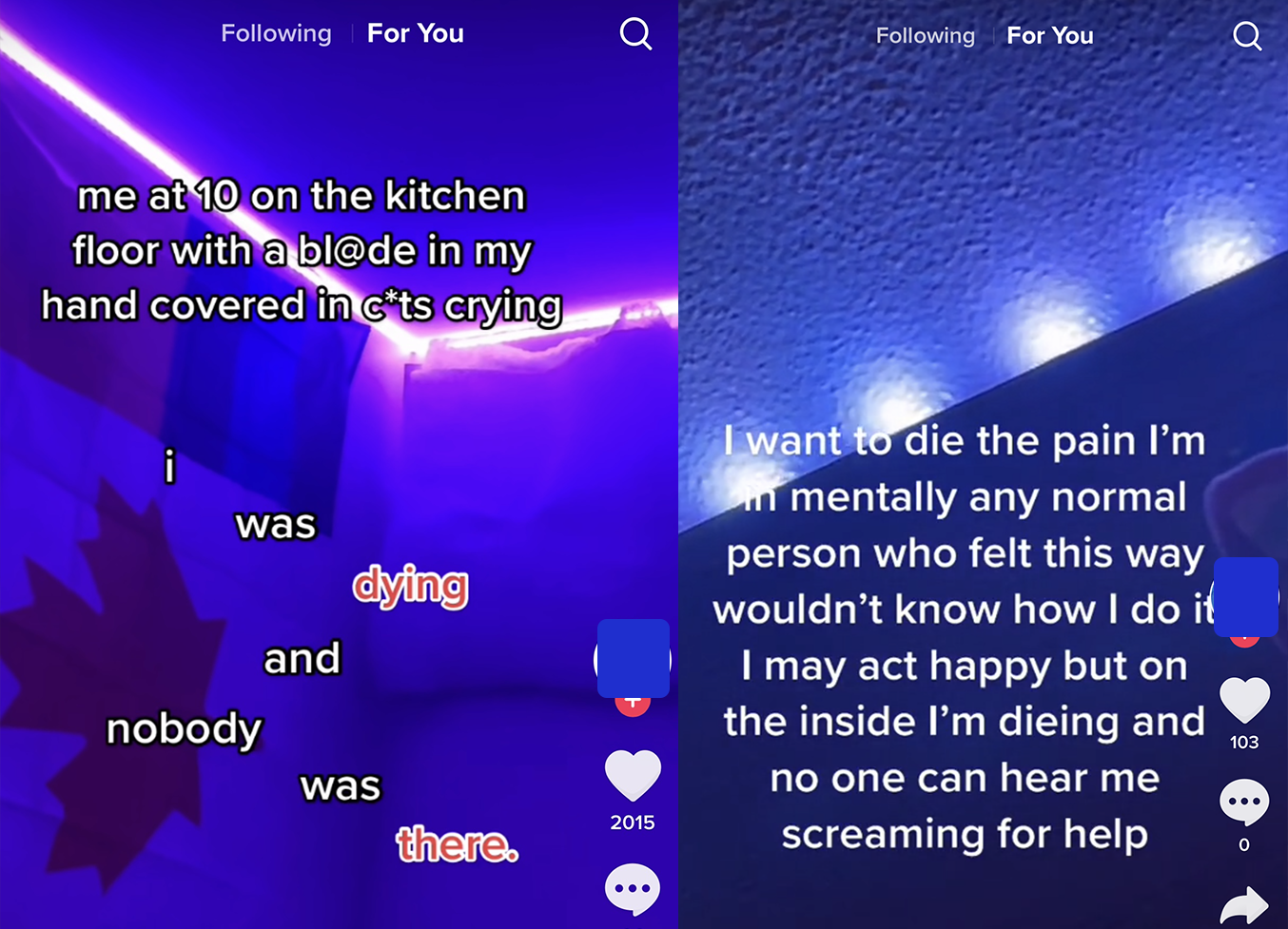

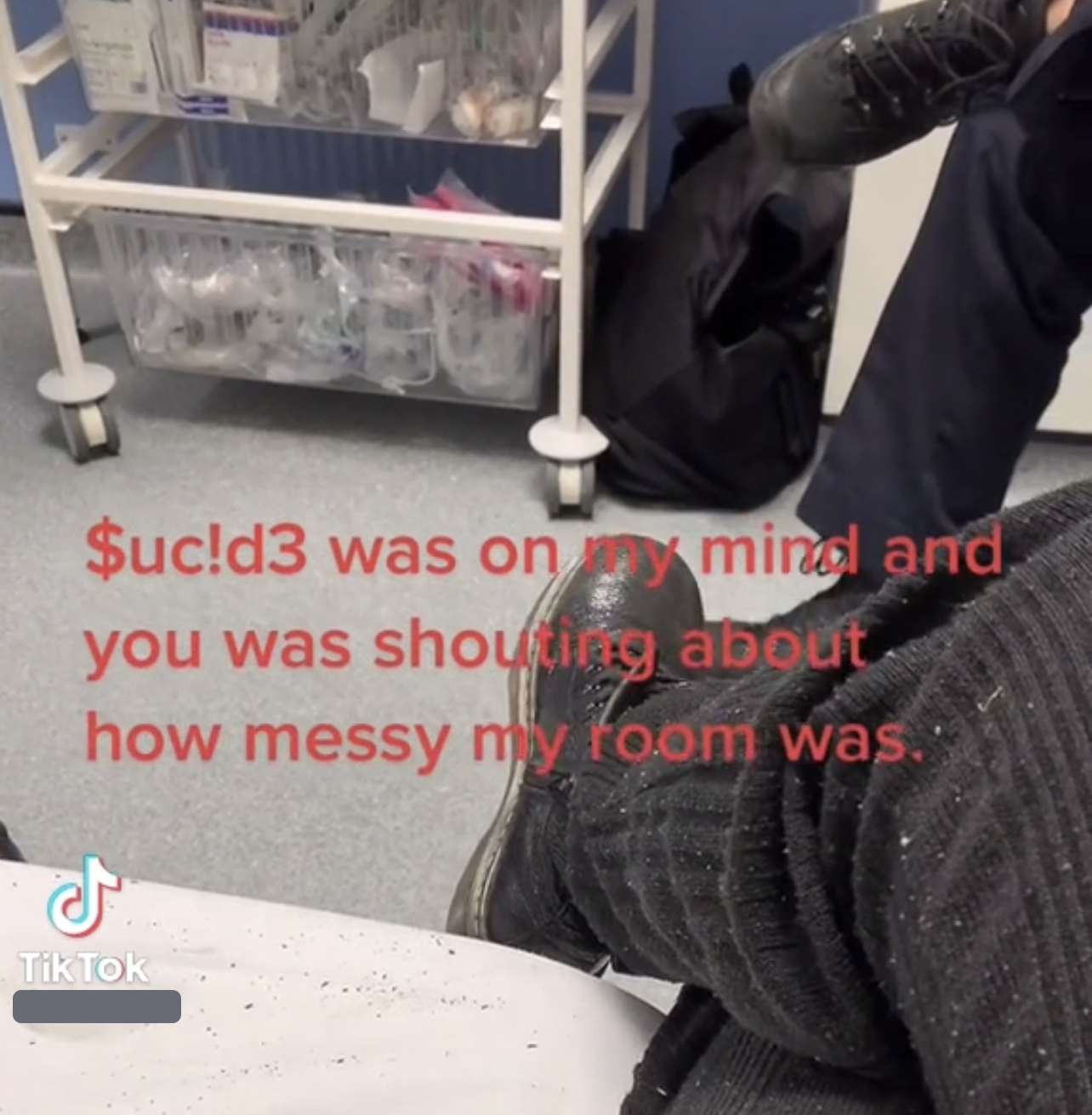

"Suicide was on my mind, and you was shouting about how messy my room was." "I'll put your name on a bullet, so that everyone will know that you were the last thing that went through my head."

"I think 15 years is enough on this earth."

Thus began Raw Story's odyssey into #paintok, the dark netherworld of self-harm content that social media app TikTok makes available to young teens. Just two days after Raw Story created an account for a 13-year-old user, the age of a typical American eighth grader, TikTok suggested a video of a person dangling one foot off the edge of a skyscraper, hashtagged "giving up."

In the following days, TikTok played videos of users discussing suicide attempts from hospital beds; children joking about using razor blades for self-harm; and videos showing young women hospitalized for anorexia. The vast constellation of videos shown to Raw Story's teen account demonstrates that TikTok fails to police suicide and self-harm content the company has banned.

TikTok is a social media app that provides a stream of user-uploaded videos, recommending additional videos based on how long users watch certain ones. It's the second-most popular social media app for teens — a March 2021 Facebook report found teens spend "2-3X more time" on TikTok than they do on Instagram. The app is owned by Beijing-based ByteDance, and is China's most successful social media foray abroad.

It is hard to convey in words the haunting quality of the videos TikTok recommended. The children are often young, and the anguish on their faces is visceral. Some videos show cuts on faces, arms or legs, or offer captions like "me at 10 years old bl££ding down my thighs and wrists," or "me at 10 on the kitchen floor with a bl@de in my hand covered in c*ts." Frequently, users mouth sad lyrics alongside captions about self-harm. In one video, a teen tears up about a parent's addiction. In another, a user discusses a father who touches her, and asks other users if the touching is acceptable. In a third, a beaming girl shows off a pan of cupcakes iced "Happy Halloween." A green plastic "Boo!" pokes from the frosting of one of the cakes. The photo is captioned, "Me at 12 when the @buse finally stopped."

Young women shared photos of themselves in ambulances, emergency rooms and hospital beds. At least a dozen posted videos with feeding tubes in their noses. Several Raw Story staff members shown the videos were traumatized.

The videos TikTok suggested to our eighth-grade account were grim. They included multiple videos posted by young adults about their apparent suicide attempts. "This is what a failed attempt feels like," reads one caption. Another on the same account stated, "Nobody cared or noticed until I was in the hospital half dead." Comments include users discussing the shame they felt after trying to take their own lives.

"I once tried at school before school started," wrote one user, "but I was to [sic] scared and high up so I just called 911 to get me down because I didn't know how to get down myself."

Raw Story gave TikTok a video from one user who posted about a suicide attempt; the video has 296,000 views. TikTok declined to take it down. After receiving a Google Drive folder of the videos, a company spokesperson declined to comment.

Testifying yesterday to a Senate subcommittee on consumer protection, TikTok's head of public policy for the Americas, Michael Beckerman, said the company was committed to protecting its young users.

"I'm proud of the hard work that our safety teams do every single day and that our leadership makes safety and wellness a priority, particularly to protect teens on the platform," Beckerman said. "When it comes to protecting minors, we work to create age-appropriate experiences for teens throughout their development."

Beckerman emphasized measures the company has introduced for parents, including Family Pairing, which allows parents to set restrictions on teen accounts. He noted that users under 16 cannot send direct messages, and said some of TikTok's efforts to protect teens were industry-leading.

TikTok declined to say whether Beckerman was shown videos Raw Story provided prior to his Senate appearance.

TikTok no longer allows users to search for suicide-related videos directly. A search for "suicide" returns a "click to call" button for the National Suicide Prevention Lifeline, and comprehensive resources for suicide prevention on TikTok's website. Last year, the company updated its policies, adding resources for users who search for terms like "selfharm" or "hatemyself." TikTok received higher marks than YouTube and Twitter in a recent Stanford Internet Observatory review of various platforms' policies on self-harm, though the study didn't address companies' efforts to remove content.

But TikTok's recommendation algorithm leads users who dwell on sad content to a universe of adolescent distress. On the first day after opening the teen account, Raw Story paused on police and military videos. TikTok then played videos about guns and, eventually, depression. Raw Story stopped to read memes about depression, and eventually received videos about self-harm.

Many videos TikTok played showed users sharing personal experiences. But they also normalized self-injurious behavior. Videos of women with feeding tubes in their noses and posts about cutting were so common that eventually only videos of young girls in hospital beds stood out.

Just three days after Raw Story opened an account with the age of an eighth grader, TikTok recommended a video with a scene from a recent Netflix show in which two parents react to the attempted suicide of a child. One adult is heard screaming, "Call 911, call 911," while another wails, "Not my baby, not my baby."

Another TikTok recommendation showed a gaunt young woman alongside the words, "Waking up with 2 IVs and doctors surrounding me saying they found me passed out on the bathroom floor." A third said, "At 10, I was sad and mommy told me that it was just in my head. At 18, I don't want to live and mommy tells me that it is just in my head. She is right, it is all 'in my head' and that's the problem."

Raw Story's teen account received a flood of content from accounts named PainHub, whose logo mimics that of the pornography website PornHub, showing upset people alongside quotes about suicide.

TikTok's rules about self-harm are vague.

"We do not allow content depicting, promoting, normalizing or glorifying activities that could lead to suicide, self-harm, or eating disorders," TikTok's guidelines say. "However, we do support members of our community sharing their personal experiences with these issues in a safe way to raise awareness and find community support."

Samantha Lawrence, a pediatric nurse practitioner and pediatric mental health specialist in St. Petersburg, Florida, said she's witnessed TikTok's dangers firsthand.

"I know of a child sent pornographic videos by an adult after posting dance videos," Lawrence said. "She was nine, and had her own iPhone which was supposed to have certain safety settings in place. We're learning how much slips through the cracks."

Currently, TikTok is facing criticism from educators about TikTok "challenges," trending content that encourages teens to record destructive activities and post them on the platform. Earlier this month, police arrested a teen who punched a 64-year-old disabled teacher in the face. Lawrence said she's seen the impact of TikTok challenges up close.

"I have had teenagers who have been acutely, severely or permanently damaged from TikTok challenges," she said, including children "physically damaged from TikTok challenges with permanent disfigurations."

TikTok bars posts about "dangerous acts or challenges," but that hasn't stopped users from acting them out. Two weeks ago in China, where TikTok's parent operates a sister app, an influencer livestreamed her own suicide to her 760,000 followers, following comments like "Good for You." Earlier this year, a Pakistani teenager died instantly after shooting himself in the head with a gun he didn't realize was loaded.

Lawrence said she hadn't seen patients exhibiting self-harm resulting from using TikTok. "But a child who was already suicidal may feel more invited to commit self harm with ideas presented on TikTok," she said. "Often my patients who cut know other individuals who cut, so there does seem to be a social influence."

Research recently published in the Journal of Youth and Adolescence found that social media use had little effect on boys' suicidality risk, but that girls who used social media for two to three hours a day when they were roughly 13 years old, and who later increased their use, were at higher risk for suicide as developing adults.

The study's author, Brigham Young University professor Sarah Coyne, noted she has a young daughter who joined TikTok this year.

"Thirteen is not a bad age to begin social media," Coyne said in an article about the study. "But it should start at a low level and should be appropriately managed." She suggested parents limit young teens' usage to 20 minutes a day, maintain access to their accounts, and talk with teens often about what they see. Over time, she said, teens can increase their usage and independence.

Alan Blotcky, a psychologist in Birmingham, Alabama, said TikTok might offer some positives for struggling teens. "People feel connected," he said. "They establish relationships with people online."

But Blotcky worries about teens who view social media as a substitute for treatment.

"Some people who legitimately have psychological and psychiatric problems don't go get professional help," he said. "Instead they spend a lot of time on social media thinking it's a substitute for professional help, and it's not."

Some content TikTok recommended showed users literally begging for aid, with captions like, "I've been so Depressed and life has been so Complicated, Please Help." Others recounted struggles around self-worth.

"I always do something wrong, mess up and make a mistake, it's always my fault, it's never enough, i am never enough," one remarked.

TikTok also recommended distressing film clips, including Robin Williams talking about dying in the movie The Angriest Man in Brooklyn.

"By the time you see this I'll be dead," Williams says.

Williams died by suicide in 2014.

Lawrence said TikTok can expose teens to dangerous information when they are most impressionable. Teens are "still developing the prefrontal cortex of the brain which helps regulate emotions and impulse control," she said.

"Teenagers are more likely to struggle with emotions and impulse control than adults," she continued. "The pandemic has caused documented increases in depression and anxiety in youth, and with TikTok as their outlet, this is going to expose these mental health struggles to the rest of their peer group, opening their world to the suicidal struggles of other youth which can lead to other challenges."

Blotcky concurred. "Children don't have the cognitive abilities or the emotional maturity to take into account the things that we're talking about," he said.

Devorah Heitner, author of Screenwise: Helping Kids Thrive (and Survive) in Their Digital World, says parents should encourage teens who see challenging content to discuss it.

"Parents should try not to act shocked and shouldn't get punitive about what kids have seen," Heitner said. "We need to stay calm or they won't tell us things. Say, 'Tell me what you saw, let's understand it. Let's talk about what you're feeling.'"

"We have to make them make sense of it," she added. "And listen to their feelings about it. If it's really traumatic, they may need to talk to a counselor at school or a therapist in the community."

Blotcky agreed. "For children and teenagers it behooves parents to monitor what their children are doing on social media," he added. "Kids can find themselves involved in things online that spiral out of hand."

Beyond her patients, Lawrence frets about the long-term impacts of social media and what its glamorization means. YouTube, Facebook and Instagram rake in immense profits by running advertising alongside content created by others. TikTok boasts two billion downloads. The impacts of the companies' algorithmic suggestions are hardly clear.

"I read that more kids today want to be a YouTube star than an astronaut," Lawrence said. "How did we get such a young crowd living inside of an application in this manner, and why? In what ways does this help us as a society? What are the benefits or harms of this? We just don't know."

If you or someone you know needs help, call the National Suicide Prevention Lifeline at 800-273-TALK (8255). You can also text a crisis counselor by messaging the Crisis Text Line at 741741.

John Byrne holds direct investments in Softbank, one of TikTok's early investors; Alibaba; Facebook; Alphabet, the owner of YouTube; Microsoft; and Tencent. He is the founder of Raw Story.