Warning: This article contains descriptions of individuals discussing self-harm and eating disorders and may be triggering for some readers. Raw Story has included only mild photos, as images of actual teens in crisis on TikTok cannot be published. Have tips about TikTok or internal documents about tech companies? Email techtips@rawstory.com.

ATLANTA — In August, Apple, the world's largest company by market capitalization, announced it would begin scanning uploaded photos for child pornography. The company also said it would warn teens who send nude photographs about the dangers of sharing sexually explicit images.

"At Apple, our goal is to create technology that empowers people and enriches their lives — while helping them stay safe," Apple said in a release. "We want to help protect children from predators who use communication tools to recruit and exploit them."

And yet, weeks after a major report revealed that social media app TikTok recommends bondage videos to minors, including clips about role-playing where people pretended to be in relationships with caregivers, Apple continues to buy ads targeting young teens. The ads appeared just prior to Raw Story's Wednesday report that found TikTok serves teens videos about suicide and self-harm.

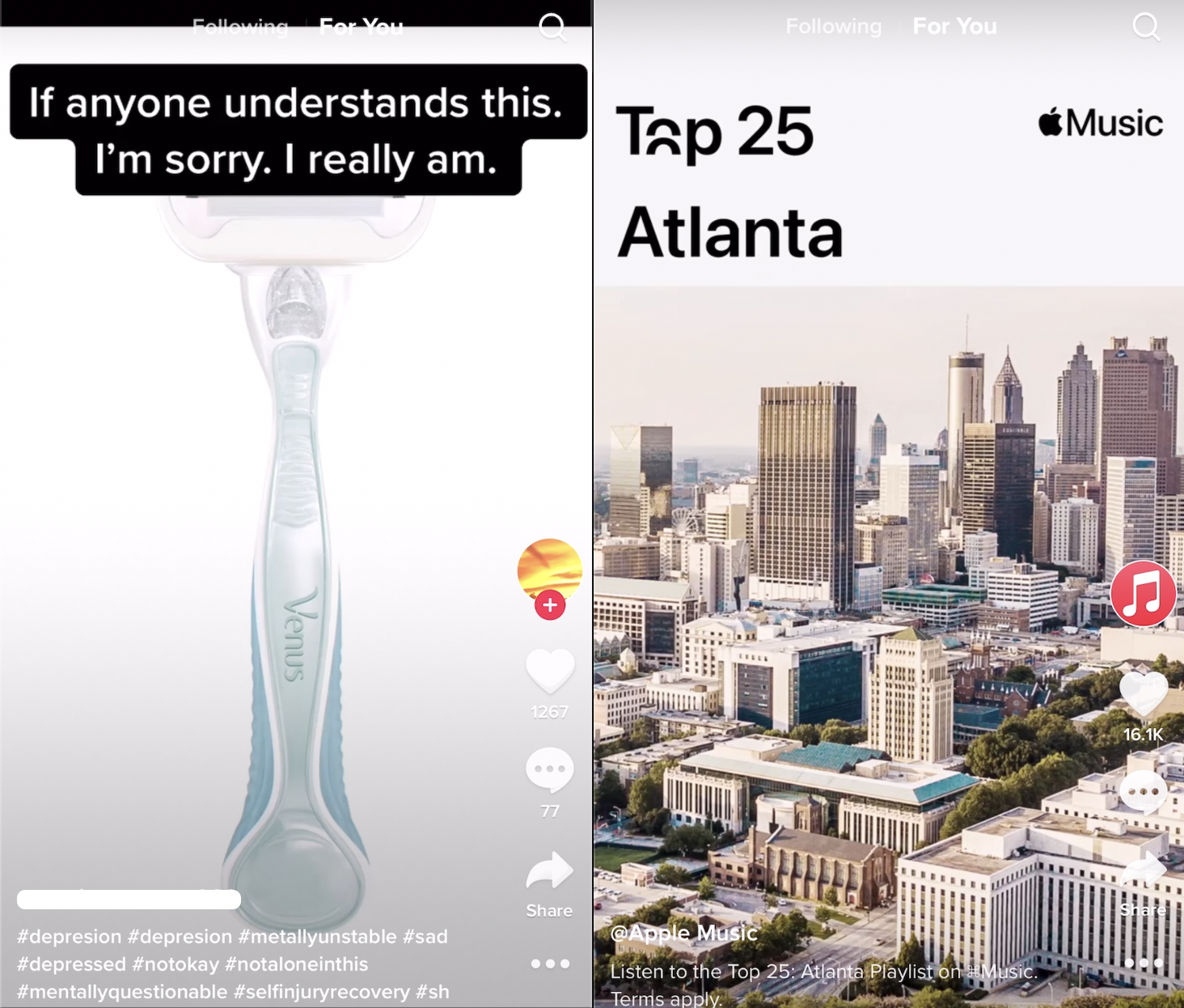

In a clear example of Apple's peril, Raw Story found an Apple Music ad adjacent to two videos about users cutting themselves. One displayed a razor, antibiotic ointment, gauze, and sweatshirts used to hide scars. In the second video, a girl in dark eye makeup appeared beneath the caption, "My mom when she found me bl33ding."

"If I was as pathetic as you are, I would have killed myself ages ago," a voice says, mimicking a parent.

TikTok provides a stream of user-uploaded videos and recommends additional clips based on which videos users watch. The app is owned by the Beijing-based company ByteDance, and is popular among young teens. In its app store, Apple calls TikTok a "must-have" and "essential."

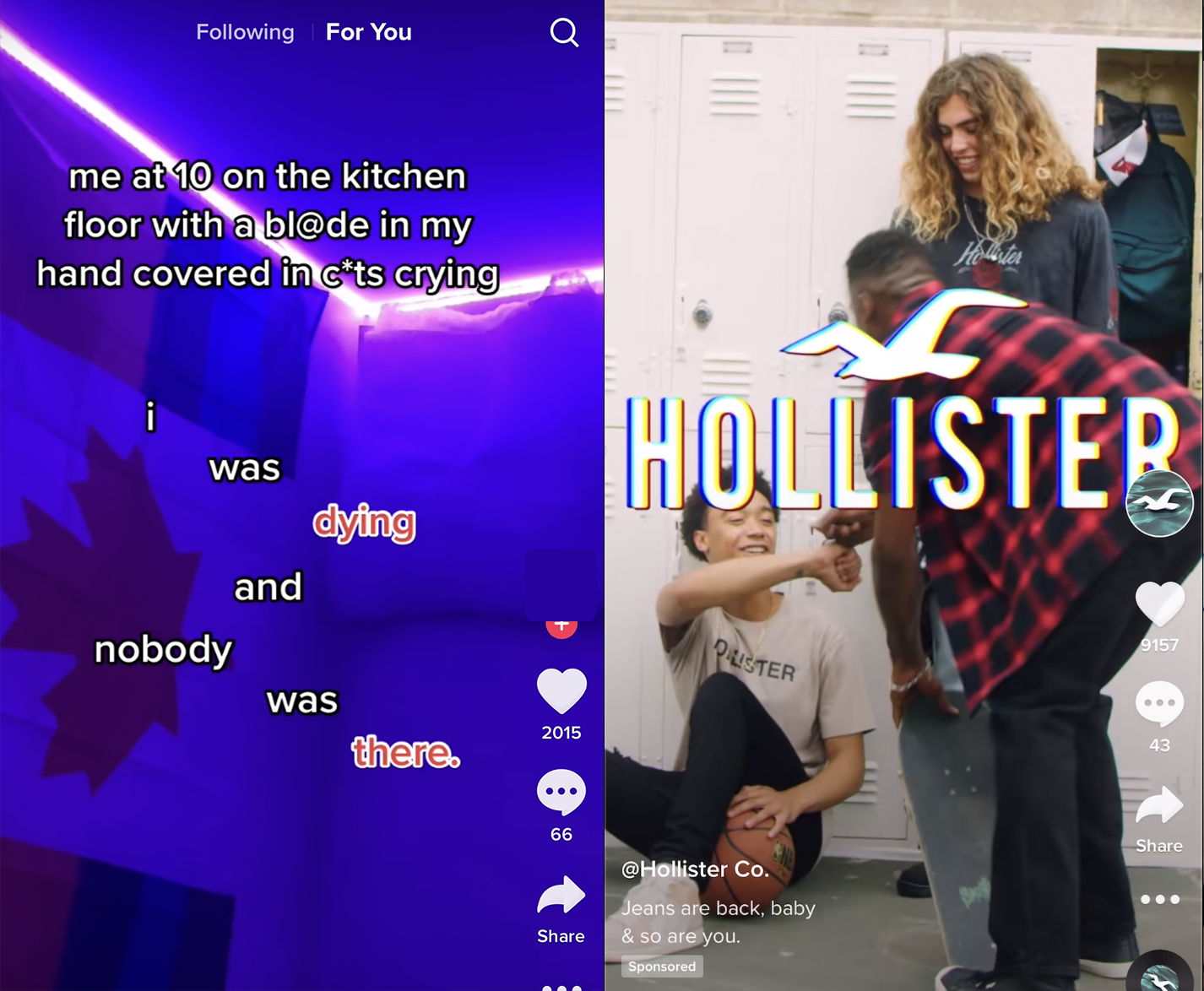

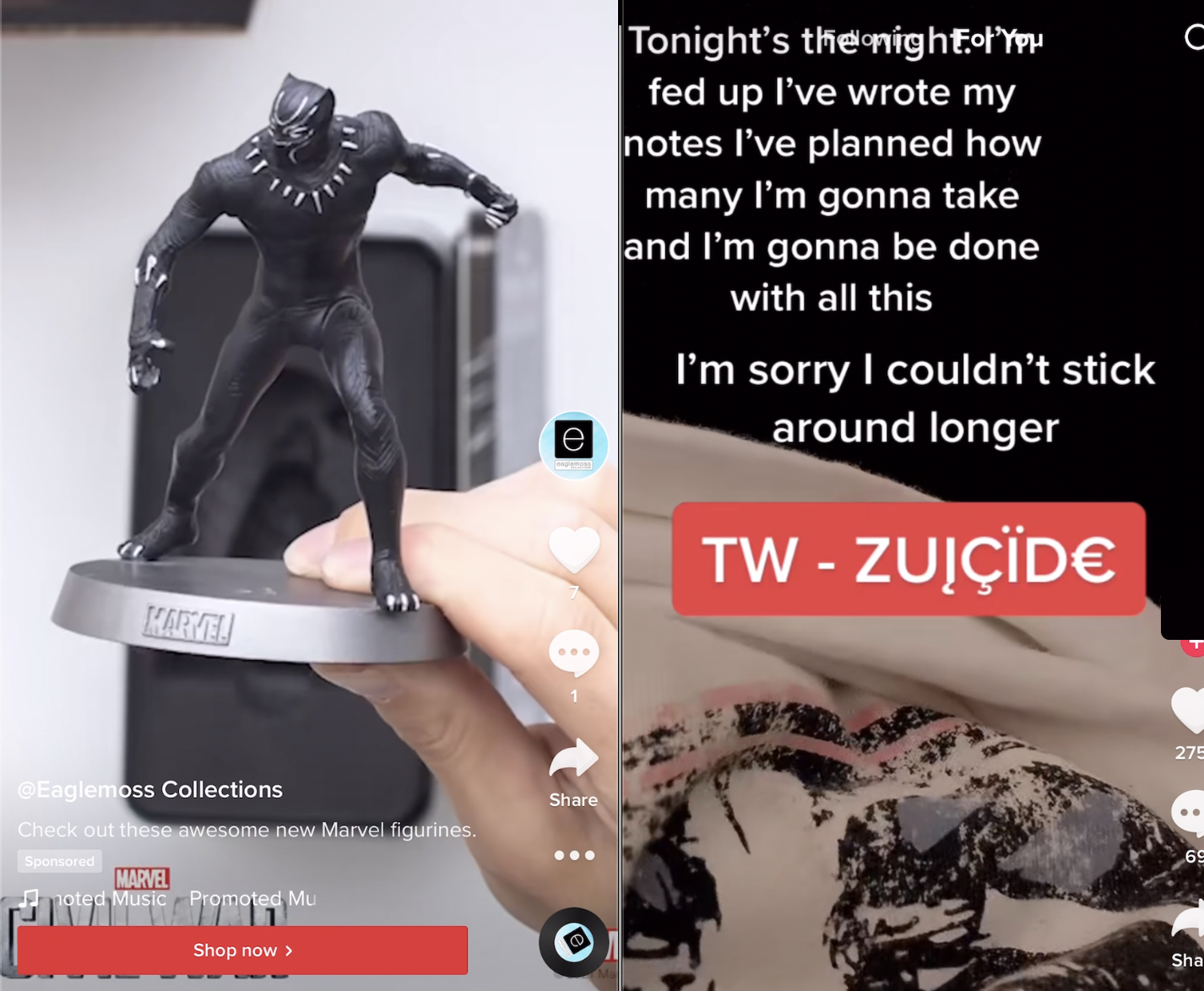

Apple's ad was among dozens showing that TikTok monetizes videos users post about self-harm, eating disorders and suicide. Other major brands included Amazon, Hollister and Target. An ad for Disney's Marvel action figure toys followed a video of a young woman hospitalized after a suicide attempt. A Target ad ran next to a video of a girl discussing the shame of cutting herself. An Amazon ad accompanied a video of a girl joking about razors. "Jeans are back baby," quipped Hollister, following a video in which a young woman mentioned crying herself to sleep in a mental hospital.

The juxtaposition of major American brands alongside teens discussing self-harm was stunning.

The videos demonstrate the challenges of policing user-uploaded content, and the lengths advertisers will go to reach children, whose brand habits can follow them for life.

Apple, Disney, Marvel and Amazon did not reply to multiple requests for comment. Each was sent videos of their ads running alongside videos about self-harm or suicide. Target didn't comment for the record. TikTok, which received a dozen clips, declined to comment.

Abercrombie & Fitch, which owns the Hollister brand, said it reached out to TikTok about the videos and that it doesn't place ads beside "harmful and dangerous content." Raw Story confirmed the image below alongside Hollister's ad has been taken down.

"We are committed to working with all of our social media partners to ensure content is safe and appropriate for our audiences," spokeswoman Kara Page said. "We followed up with TikTok and can confirm most of these videos were removed for violating the platform's community guidelines."

Advertisers often find their appeals placed beside problematic content online. And the issue of how to keep teens safe online is complex — Apple delayed its child protection measures after blowback from privacy advocates. But the brands soliciting young teens on TikTok continue to do so despite a September Wall Street Journal report documenting drug and sex videos TikTok serves to minors.

Just before an ad for Target promoting Ariana Grande's Cloud perfume, a young girl lip-syncs to Billie Eilish's "Everything I Wanted." "Nobody cried, nobody even noticed," she mouths, in front of a caption, "When I wore long sleeves all through high school and would constantly hold my arms."

An Amazon Prime ad featuring actress Elizabeth Bowen was followed by a girl joking about cutting herself. The girl posted about razors and getting a blood test, suggesting the razor was jealous. The girl's account is filled with videos about surviving an eating disorder. One video shows her with a feeding tube up her nose.

Hollister's ad appeared in the same sequence of videos with the words, "Me at 10 on the kitchen floor with a bl@de in my hand covered in c*ts crying." Another displayed a young woman in a hospital recovering from an eating disorder with the caption, "About to get my [feeding] tube placed 😭."

Hollister, which said it had asked that the videos be taken down, said it was dedicated to teens' health and well-being.

"TikTok and Hollister have a shared dedication to the safety, health and well-being of our customers and communities," spokeswoman Kara Page said. "In 2020, we launched World Teen Mental Wellness Day, which occurs annually on March 2 and aims to disrupt the stigma against teen mental wellness. Hollister's mental wellness support also continues year-round through the Hollister Confidence Project, an initiative and grant program dedicated to helping teens feel their most comfortable, confident and capable."

TikTok recommended videos of young adults discussing cutting themselves to an account Raw Story set up for a user it said had just turned thirteen. Raw Story initially paused on military videos; TikTok then recommended depression memes, followed by suicide and self-harm clips. As Raw Story noted Wednesday, some videos TikTok recommends show young girls in hospitals after suicide attempts, with cuts on faces, arms or legs. Others show anorexic girls with feeding tubes in their noses. Captioned posts include phrases like "me at 10 years old bl££ding down my thighs and wrists," or "me at 10 on the kitchen floor with a bl@de in my hand covered in c*ts." In one video, a user posts about a father who touches her, and asks other users if the touching is acceptable.

TikTok placed one ad for Marvel's Black Panther and Captain America toys near a video the app recommended about cutting and suicide. The latter was captioned, "Tonight's the night. I've wrote my notes. I've planned how many I'm gonna take and I'm gonna be done with all this. I'm sorry I couldn't stick around longer." The video flashed the word "ZUICÏD€."

News that TikTok is running Apple, Amazon and Target's ads beside young users' videos about suicide comes just weeks after TikTok's first virtual product event, where it discussed new brand safety tools for advertisers.

According to Ad Age, the company promised "machine-learning technology that classifies video, text, audio and more based on the level of risk," allowing "advertisers to make decisions about which kind of inventory they'd like to run adjacent to and avoid."

"This is especially important as TikTok wants to maintain a healthy atmosphere that promotes shopping," the site said, noting that the company hoped to "fuel in-app shopping ahead of the holidays."

Tech companies struggle to police the user-generated content they profit from. Last year, advertisers cut spending on Facebook over hate speech, forcing the company to invest in tightening controls on extremism. In 2019, Google's YouTube suffered an exodus of advertisers over pedophilia comments, just two years after Google found itself embroiled in a similar scandal around white nationalists and Nazism. According to Google, most of the advertisers returned.

Much of the focus on teen safety has centered on the platforms themselves. But major U.S. companies continue to fund platforms that feed troubling content to teens, despite odious content surfacing time and time again.

Alan Blotcky, a clinical psychologist from Birmingham, Alabama, who works with teens and families, called the companies' advertising "shameful."

"Advertisers should be ashamed of themselves running ads adjacent to content that is likely to be harmful and even toxic to some vulnerable children and teens," Blotcky said. "Making money pales in comparison to the social responsibility of protecting our youth."

Josh Golin, executive director of Fairplay, a nonprofit that fights marketing to children, said advertisers should take responsibility for where their ads run.

"I think they absolutely have a responsibility not to advertise in any way adjacent to self-harm and other concerning content," Golin said. "And if TikTok is incapable or unwilling to stop putting their ads next to that content, they should pull their ads."

Fairplay recently filed an FTC complaint against TikTok, alleging that it continues to collect children's personal data. In 2019, TikTok's owner, ByteDance, paid $5.7 million to settle an FTC complaint alleging it illegally collected data from children.

"Advertisers, like the platforms, are putting what's good for their profits ahead of what's good for children," Golin said. "They see a huge teen audience and they are looking to monetize them regardless of whether their ads are appearing with toxic content."

Testifying Tuesday to a Senate subcommittee on consumer protection, TikTok's head of public policy for the Americas, Michael Beckerman, defended the company's efforts to protect its young users.

"I'm proud of the hard work that our safety teams do every single day and that our leadership makes safety and wellness a priority, particularly to protect teens on the platform," he said. "When it comes to protecting minors, we work to create age-appropriate experiences for teens throughout their development."

TikTok's rules about self-harm depend on how they are interpreted. Many of the videos shown to Raw Story's teen account appear to comply with the platform's rules.

"We do not allow content depicting, promoting, normalizing, or glorifying activities that could lead to suicide, self-harm, or eating disorders," the guidelines say. But, they add, "We do support members of our community sharing their personal experiences with these issues in a safe way to raise awareness and find community support."

Stanford's Internet Observatory rated TikTok's self-harm guidelines ahead of YouTube, Twitter and Instagram. The study did not examine the platforms' effectiveness in removing content.

TikTok recently announced it would let its algorithms try to automatically remove harmful content. Previously, the company required user-flagged videos to undergo human review. TikTok has said no algorithm will be completely accurate in policing a video because of the context required.

While TikTok represents China's most successful social media foray on American soil, its parent company, ByteDance, has struggled with what content to allow. After relaxing an early rule that restricted skin exposure and bikinis, the platform was inundated with sexualized content.

Few videos alongside the ads conveyed positive sentiment, but at least one seemed to suggest TikTok might offer some productive mental health content for teens. In a video titled "What Teen Mental Hospitals Are Really Like," a young contributor discusses the challenges of her first night in the hospital. The video is tagged with the hashtags "mental health community" and "wellness hub."

The video was followed by a girl talking about why she feels she's the family disappointment, and another captioned, "My entire life, that's been nothing but me trying to run away from my loneliness and tears."

Shortly afterward, Hollister ran an ad for jeans.

John Byrne holds direct investments in Amazon; Alibaba; Softbank, one of TikTok's early investors; Alphabet, the owner of Google and YouTube; Facebook; Microsoft; and Tencent. He is the founder of Raw Story.

Prior articles in this series:

- #Paintok: The bleak universe of suicide and self-harm videos TikTok serves young teens

- TikTok recommends gun accessories and serial killers to 13-year-olds