Warning: This article contains graphic descriptions of eating disorder treatments and hospitalizations, and may be triggering for some readers. To avoid images referencing self-harm, Raw Story included only brands' ads in this piece.

Twenty-eight years ago, an enterprising ad executive working for the California Milk Processor Board came up with a slogan that was nearly discarded. "It's not even English," one executive recalled. Agency staff considered it lazy, not to mention grammatically incorrect.

The slogan — "Got Milk?" — became one of the most recognizable advertising pitches of modern times.

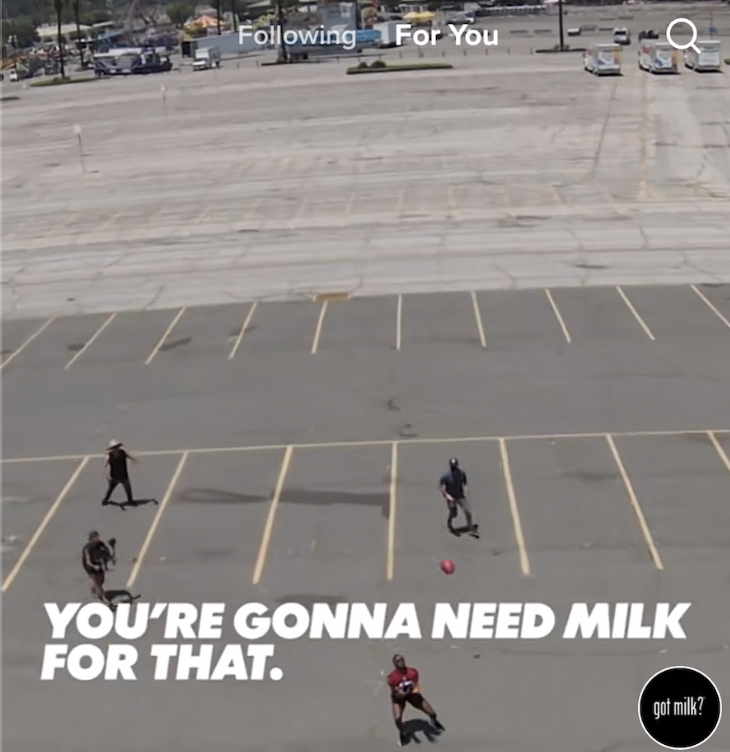

Today "Got Milk?" appears alongside TikTok videos of young girls glamorizing anorexia and nutritional feeding tubes. "You're gonna need milk for that," quips one ad in a stream of videos that includes a young girl with a tube in her nose. Another ad appears near a video of a girl whose feeding tube is taped to her face with a Minions bandage. The Minions are impish yellow animated characters from children's films.

Got Milk's ad was one of many TikTok placed between graphic videos of young adults struggling with mental illnesses. A Raw Story investigation found at least three dozen young women connected to feeding tubes alongside ads for Nike, Forever 21, Clearasil and other American brands. TikTok paired advertisers with a girl toasting to her feeding tube in a hospital; a young woman joking about being resuscitated after her heart stopped; and a video posted by a user about an IV inserted near the heart for nutrition, set to the 1981 hit "Tainted Love."

The Milk Processor Board, which owns "Got Milk?", did not respond to a request for comment.

TikTok is a social media app that plays short, user-uploaded videos. Owned by the Beijing-based company ByteDance, the app recommends videos based on what the user pauses to watch. Typically, TikTok suggests harmless clips like teens performing silly dances. Those who pause to watch videos about depression, however, can end up mired in a galaxy of self-harm. The pairing of teen-focused brands with hospitalized adolescents demonstrates TikTok's difficulties in policing user-uploaded content and the lengths companies go to target teens, whose brand affinities can follow them for life.

TikTok played all of these videos on an account Raw Story set up with a birthday indicating the user had just turned 13 years old. The same account received ads for Pizza Hut, Taco Bell and Domino's.

Advertisers can't always control what their ads appear next to. But they can pick where they advertise. The brands soliciting young teens on TikTok do so despite reports the app serves drug and sex videos, ads for weapons accessories, and suicide-related videos to minors.

More than 30 million Americans will suffer from some type of eating disorder in their lifetime, research shows. The illness has the second highest mortality rate of all mental illnesses after opiate addiction. According to the Eating Disorders Coalition, one American dies from the disease every hour.

"We've seen three times the number of eating disorders over quarantine," said Dr. Stephanie Zerwas, a professor at the UNC Department of Psychiatry who was formerly the Clinical Director of the Center of Excellence for Eating Disorders. "And when I talk to teenagers and ask why they started to worry about eating and their body, they say, 'I was on my phone a lot.' Seeing all of this stuff on TikTok really led them to feel like this is attractive, this is interesting, maybe this is something I can do."

Nike's ads appeared beside a video about a failed suicide attempt and an interview with a mother about her daughter's self-harm.

One of Nike's ads, "This Is How We Play," adjoined a TikTok-recommended anorexia recovery montage. The clip showed a young woman doing a shot despite having a feeding tube in her nose. Nike's swoosh logo followed. Beside a second Nike ad, a young woman with a feeding tube posted, "Hold me I'm falling apart."

Also in this series: Apple, Amazon and Marvel: How TikTok monetizes teens cutting themselves and #PainTok: The bleak universe of suicide and self-harm videos TikTok serves young teens. Have tips about TikTok or internal documents from tech companies? Email techtips@rawstory.com.

Nike did not respond to repeated requests for comment. The brand is not new to eating disorder scandals: in 2019, Mary Cain, the youngest American track and field athlete to make a World Championships team, accused a company coach of forcing her to diet until her body started breaking down. Nike's coach was banned from professional sports, and its CEO later resigned.

TikTok placed a Cinnabon ad near a clip of a girl discussing her struggles with anorexia. "When the fear of gaining weight turns into a fear of going bald," the caption said. TikTok then displayed a woman sitting on a bed in pajamas. Her caption: "I jumped from a bridge in June 2021 to show my mental health it won. It's been 4 months and I still need another surgery after already having 4."

Cinnabon's ad featured a man dancing with the tagline: "Chocolate chip cookie with a cinnamon roll inside!"

Domino's Pizza joined a young user discussing a partner asking to skip a condom. Taco Bell championed burritos before a video of a young woman with a black eye in a hospital bed who claimed she'd fractured her spine. The ads preceded users discussing rape, mental hospitalization, and a girl referencing touching her friend's body in a casket for the last time.

Additional videos TikTok recommended showed young women so emaciated that the outlines of their skulls were clearly visible. One woman posted about bulimia while appearing next to a toilet bowl, set to the lyrics, "I picked my poison and it's you." The same music frequently accompanied users' self-harm videos featuring razor blades.

While some videos depicted recoveries, others blatantly promoted anorexia. In one video TikTok suggested, a girl spun and clapped to the words, "Me after getting diagnosed with chronic anorexia." In another, a girl danced despite a feeding tube dangling from her nose.

TikTok also paired fast fashion retailer Forever 21 with videos about suicide, starvation and mental hospitalization. The company's ads — which feature an exclusive collection with Pantone — display thin young men and women jumping and twirling around.

"You think you can hurt me?" one TikTok user asked after a Forever 21 ad. "I was inpatient for refeeding 4 bloody times." Another nearby video included a user discussing a father touching her, asking other users if it was acceptable.

One awkward pairing included an ad heralding the benefits of guacamole — "Supports the immune system," "Helps meet heart-healthy goals" — alongside a woman discussing starving herself, which she said made her feel powerful and attractive.

Clearasil, the acne treatment, appeared adjacent to a TikTok video that was particularly grim. The user's "pinned" video recounted one young woman's hospitalization journey, which began with the girl in a messy bedroom, head down, in front of empty bottles. The image was followed by others of her in a hospital bed with a bruised eye, lacerations, and a feeding tube. It also included a photo of a rescue helicopter. Posted just two weeks ago, it had 172,000 views.

In another video, under the caption, "set up a feed with me," the woman mixed a feeding regimen and injected it. Dozens of videos showed the woman posing with a feeding tube in her nose.

The account vanished after Raw Story reported it to TikTok. The profile suggested TikTok may have previously erased another of her accounts. Raw Story found a third account that remains active.

TikTok declined repeated requests for comment. The platform has made efforts to address concerns that it promotes eating disorders. In February, it announced it would direct searches for #edrecovery and phrases linked to the illness to the National Eating Disorders Helpline. It bars advertisers from promoting weight loss products to users under 18, and links searches that can spawn pro-anorexic content, such as "what I eat in a day," to announcements about healthy body image.

News that TikTok is running Nike, Forever 21 and Cinnabon's ads beside young users' videos about self-harm comes just weeks after TikTok's first virtual product event, where it discussed new brand safety tools for advertisers.

TikTok announced last month it removed 81 million videos in a three-month period this year for policy violations, revealing the massive scope of the company's challenges. This represented just one percent of total uploads, it said, meaning users upload, on average, 90 million videos a day.

Michael Beckerman, TikTok's head of Public Policy for the Americas, told a Senate committee last week that the platform is committed to protecting its young users.

"I myself have two young daughters and this is something I care about," Beckerman said. "I want to assure you we do aggressively remove content that you're describing that would be problematic for eating disorders and problem eating."

TikTok maintains it's trying to create a space for users to post about recovery. Experts, however, generally panned the company's efforts.

"TikTok is doing an awful job," Dr. Zerwas, the former Center of Excellence for Eating Disorders director, said. "TikTok seems to either not care about policing that or be okay with just having that be part of their site to the detriment of teenagers."

"I have not heard of anyone who uses it to find inspirational or recovery focused content," she added.

In January, a study highlighted by the National Institutes of Health found that even recovery videos posted on TikTok had deleterious effects. "Our case shows how even these safer videos paradoxically lead the users to emulate these 'guilty' behaviors," the study said.

Christine Peat, the current director of the National Center of Excellence for Eating Disorders, noted that even medical practitioners are encouraged to avoid graphic images of patients in presentations. She said TikTok should remove content intended to have "shock value."

"Anything that is gratuitous in terms of how graphic it is, that would lean toward the glorification of eating disorders," Peat said.

Valuable recovery content, she added, should focus on individuals leading meaningful lives, not videos suggesting "symptoms were something to be worn with a badge of honor."

"If we're thinking about what a positive depiction of recovery might look like, it might be someone taking a photo of themselves at dinner with their friends, it might be a picture with a loved one on vacation," Peat said. "It might be a TikTok celebrating a promotion in their job. Any depictions of people leading a life that's worth living is incredibly helpful for recovery."

Dr. Zerwas didn't fault TikTok for creating eating disorders. But she noted that when a teen sees others with the illness, "it can seem normalized or even glamorized. People are looking for community and that sense of belonging, and so it feels a little seductive."

Eating disorder experts said TikTok should consult professionals to develop guardrails for content. "I think they should consult professionals in the same way that Hollywood has done," Peat said. "They have consultants for that content to make sure what's there is generally accurate." Professionals can help shape rules on "what the content should and shouldn't be in order to protect people who are vulnerable," she added.

Major U.S. brands continue to advertise on platforms that monetize harmful content, despite the companies' frequent scandals. Last year, brands paused ads on Facebook over hate speech. Advertisers also rebuked YouTube for failing to notice pedophilic comments in 2019, and Nazism in 2017. Google later said most advertisers returned.

Ian Russell, the father of a child who took her own life after getting lost in a depressive spiral of content on Instagram, called the companies' profiting from noxious content "monetizing misery."

"Are we comfortable with our kids being harmed and then tech leaders making money from that harm?" asked Bridget Todd, Communications Director for Ultraviolet, a women's rights group that has criticized TikTok for not doing more to prevent eating disorders. "It baffles me that we've accepted that tech leaders can make money off of products that we already know are hurting our kids."

Advertisers "have a responsibility not to advertise in any way adjacent to self-harm and other concerning content," added Josh Golin, the Executive Director of Fairplay, a nonprofit that fights marketing to children. "If TikTok is incapable or unwilling to stop putting their ads next to that content, they should pull their ads."

Congress has taken note of social media's effects on teens' mental health. The issue drew more attention last month after a former Facebook employee leaked documents that showed it knew Instagram was harmful to teen girls.

"The stakes here are incredibly high — studies have found that eating disorders have one of the highest mortality rates of any mental illness," Sen. Tammy Baldwin (D-WI) told Raw Story in a statement. "I have worked in the Senate in a bipartisan way to ensure Americans can access treatment services for eating disorders, but more must be done to protect our kids from being exposed to content on social media platforms, whether it's TikTok, Facebook, or Instagram, that glorifies and promotes eating disorders."

Congressman Gus Bilirakis (R-FL), the Republican leader for the House Subcommittee on Consumer Protection and Commerce, joined three other Republicans last week in writing to TikTok's CEO demanding TikTok produce any research it has conducted on teens' mental health.

"The Big Tech industry continues to actively prioritize profits over the well-being of our children," Rep. Bilirakis (R-FL) said. "They've consistently proven they won't do the right thing unless required and I am deeply troubled by the relationship many of these companies have with China. We must hold them accountable by enacting common sense protections."

In September, the UK began enforcing a law requiring social media companies to employ "age appropriate design" when serving content to young users. Social media's teen-focused critics see it as a model. Two days before the bill went into effect, Instagram began requiring users to enter their birthday and introduced changes that limit advertisers' targeting of minors. Following the law's passage, TikTok announced it would stop sending push notifications in the evening to young teens, and YouTube said it would disable auto-play settings for children and add bedtime reminders.

Democrats have introduced similar legislation aimed at protecting users under 16. The so-called KIDS Act would ban auto-play settings for young teens and prohibit websites from amplifying violent or inappropriate content.

Absent legislation, however, critics say TikTok and advertisers have the most power to effect change.

"Advertiser pushback is probably where we're going to see the greatest opportunity to change this," Dr. Zerwas said. "It's really important that advertisers consider this and really be thinking carefully about whether they want a 'Got Milk' campaign to be associated with feeding tubes."

Bridget Todd, the activist, said she was cautiously optimistic.

"I really believe that there is time for TikTok to get this right," Todd said. "I'm genuinely excited to see where things land. The question is whether TikTok is going to rise to that opportunity to be a healthier space for youth."

John Byrne holds direct investments in Softbank, one of TikTok's early investors; Simon Property Group, a 37.5% owner of Forever 21; Alphabet, the owner of Google and YouTube; Facebook; Microsoft; Alibaba; and Tencent. Historically, he has been a donor to Democratic and Republican candidates for Congress, with the majority of his donations made to Democrats. He is the founder of Raw Story.